AsgardBench— Evaluating Visually Grounded Interactive Planning Under Minimal Feedback

- Andrea Tupini ,

- Lars Liden ,

- Reuben Tan ,

- Yu Wang ,

- Jianfeng Gao

ArXiv | , Vol 2603(15888)

With AsgardBench we aim to evaluate visually grounded, high-level action sequence generation and interactive planning, focusing specifically on plan adaptation during execution based on visual observations rather than navigation or low-level manipulation. In the landscape of embodied AI benchmarks, AsgardBench targets the capability category of interactive planning, which is more sophisticated than offline high-level planning as it requires agents to revise plans in response to environmental feedback, yet remains distinct from low-level execution.

Unlike prior embodied AI benchmarks that conflate reasoning with navigation or provide rich corrective feedback that substitutes for perception, AsgardBench restricts agent input to images, action history, and lightweight success/failure signals, isolating interactive planning in a controlled simulator without low-level control noise.

The benchmark contains 108 task instances spanning 12 task types, each systematically varied through object state, placement, and scene configuration. These controlled variations create conditional branches in which a single instruction can require different action sequences depending on what the agent observes, emphasizing conditional branching and plan repair during execution.

Our evaluations of leading vision language models show that performance drops sharply without visual input, revealing weaknesses in visual grounding and state tracking that ultimately undermine interactive planning. Our benchmark zeroes in on a narrower question: can a model actually use what it sees to adapt a plan when things do not go as expected?

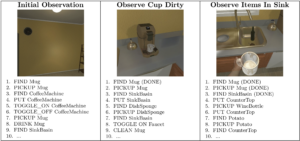

Figure 1: Agent observations and corresponding action plans in AsgardBench. Below each

image is the action plan generated from that observation. This illustrates how AsgardBench requires agents

to update or change their plans based on new visual evidence rather than following a fixed action sequence.