Fast Quantification of Uncertainty and Robustness with Variational Bayes

In Bayesian analysis, the posterior follows from the data and a choice of prior and likelihood. These choices may be somewhat subjective and can reasonably vary over some range. Thus, we wish to measure…

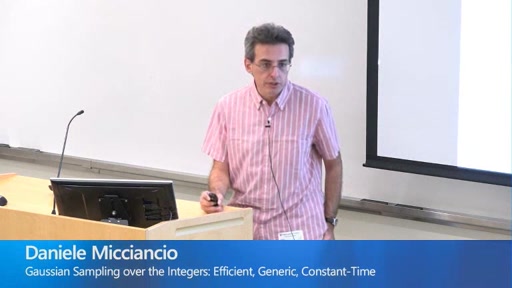

Gaussian Sampling over the Integers: Efficient, Generic, Constant-Time

Sampling integers from a Gaussian distribution is a fundamental problem that arises in almost every application of lattice cryptography, and it can be both time-consuming and challenging to implement. Most previous work has focused on…

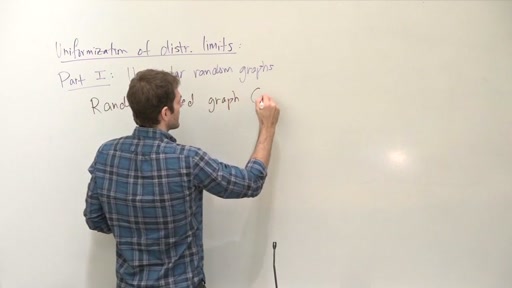

Uniformization of distributional limits of graphs

Benjamini and Schramm (2001) showed that distributional limits of finite planar graphs with uniformly bounded degrees are almost surely recurrent. The major tool in their proof is a lemma which asserts that for a limit…

Accelerating Stochastic Gradient Descent

There is widespread sentiment that it is not possible to effectively use fast gradient methods (e.g., Nesterov’s acceleration, conjugate gradient, heavy ball) for stochastic optimization due to their instability and error accumulation,…

Foundations of Optimization

Optimization methods are the engine of machine learning algorithms. Examples abound, such as training neural networks with stochastic gradient descent, segmenting images with submodular optimization, or efficiently searching a game tree with bandit algorithms. We…