Abstracts: October 9, 2023

Researcher Dr. Sheng Zhang joins “Abstracts”—your source for cutting-edge research in brief—to discuss a recent paper on distilling large language models into smaller, more efficient ones capable of excelling in broad application classes.

MEGA: Multilingual Evaluation of Generative AI

On Surgical Fine-tuning for Language Encoder

LLaVA: Large Language and Vision Assistant

LLaVA is an open-source project, collaborating with the research community to advance the state of the art in AI. LLaVA represents the first end-to-end trained large multimodal model (LMM) that achieves impressive chat capabilities mimicking spirits of the multimodal…

Agent AI

Agent-based multimodal AI systems are becoming a ubiquitous presence in our everyday lives. A promising direction for making these systems more interactive is to embody them as agents within specific environments. The grounding of large…

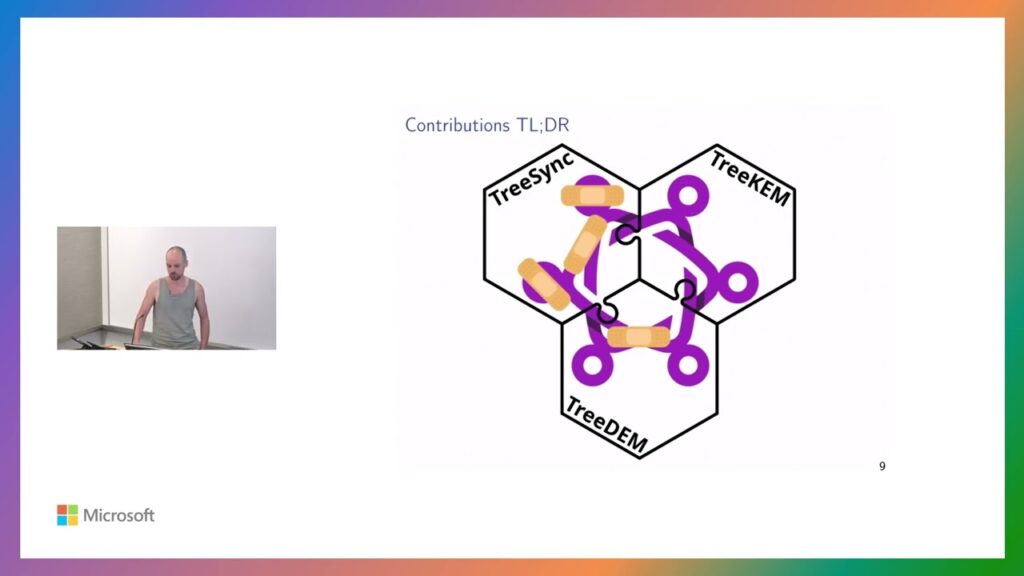

End-to-End Encrypted Group Chats with MLS: Design, Implementation and Verification

MLS is a new IETF standard that deals with secure, end-to-end encrypted group messaging. In this work, recently awarded the Internet Defense Prize and a Distinguished Paper Award at USENIX, Théophile will describe how the…