Microsoft Research Blog

Orca 2: Teaching Small Language Models How to Reason

At Microsoft, we’re expanding AI capabilities by training small language models to achieve the kind of enhanced reasoning and comprehension typically found only in much larger models.

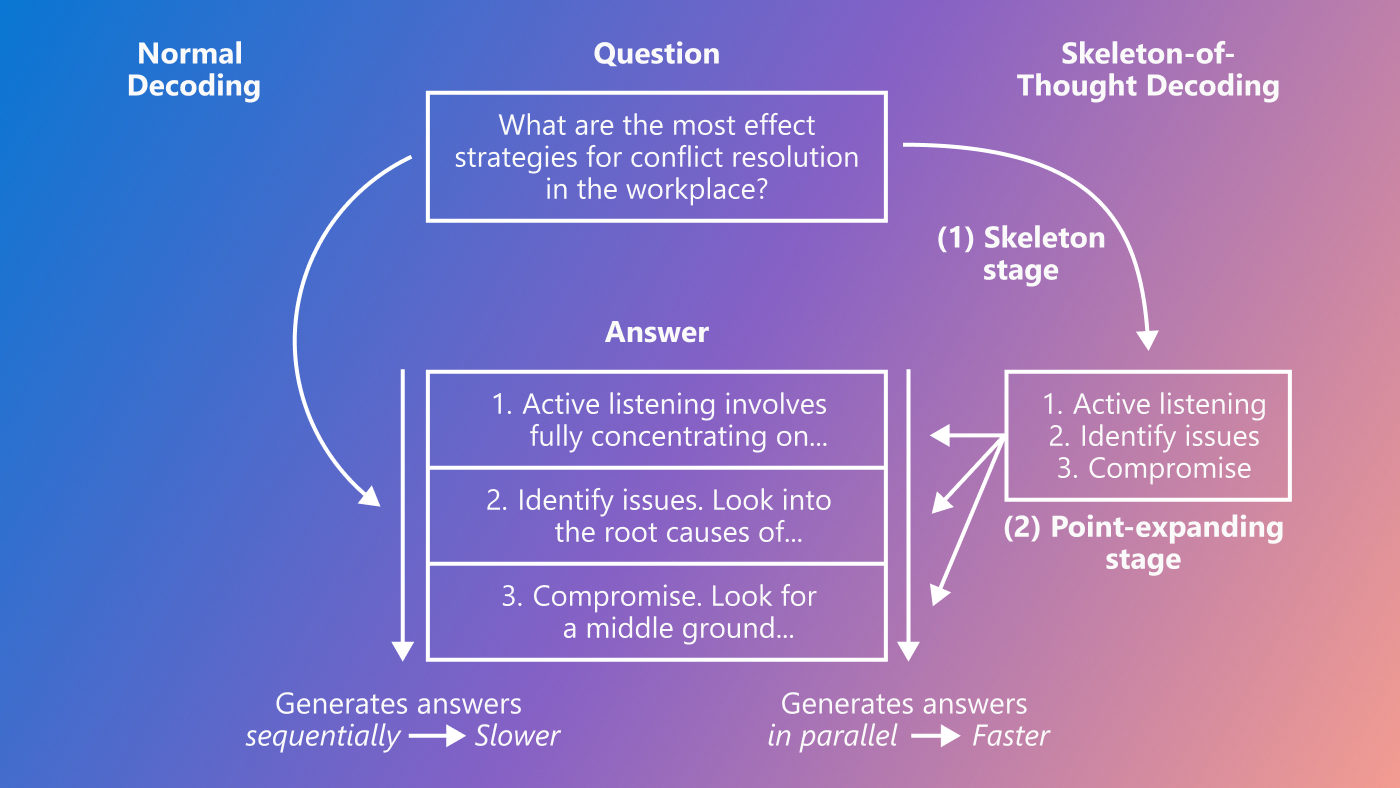

Skeleton-of-Thought: Parallel decoding speeds up and improves LLM output

This research was accepted by the 2024 International Conference on Learning Representations. Large language models (LLMs) such as LLaMA and OpenAI’s GPT-4 are revolutionizing technology. However, one of the common complaints about LLMs is their…