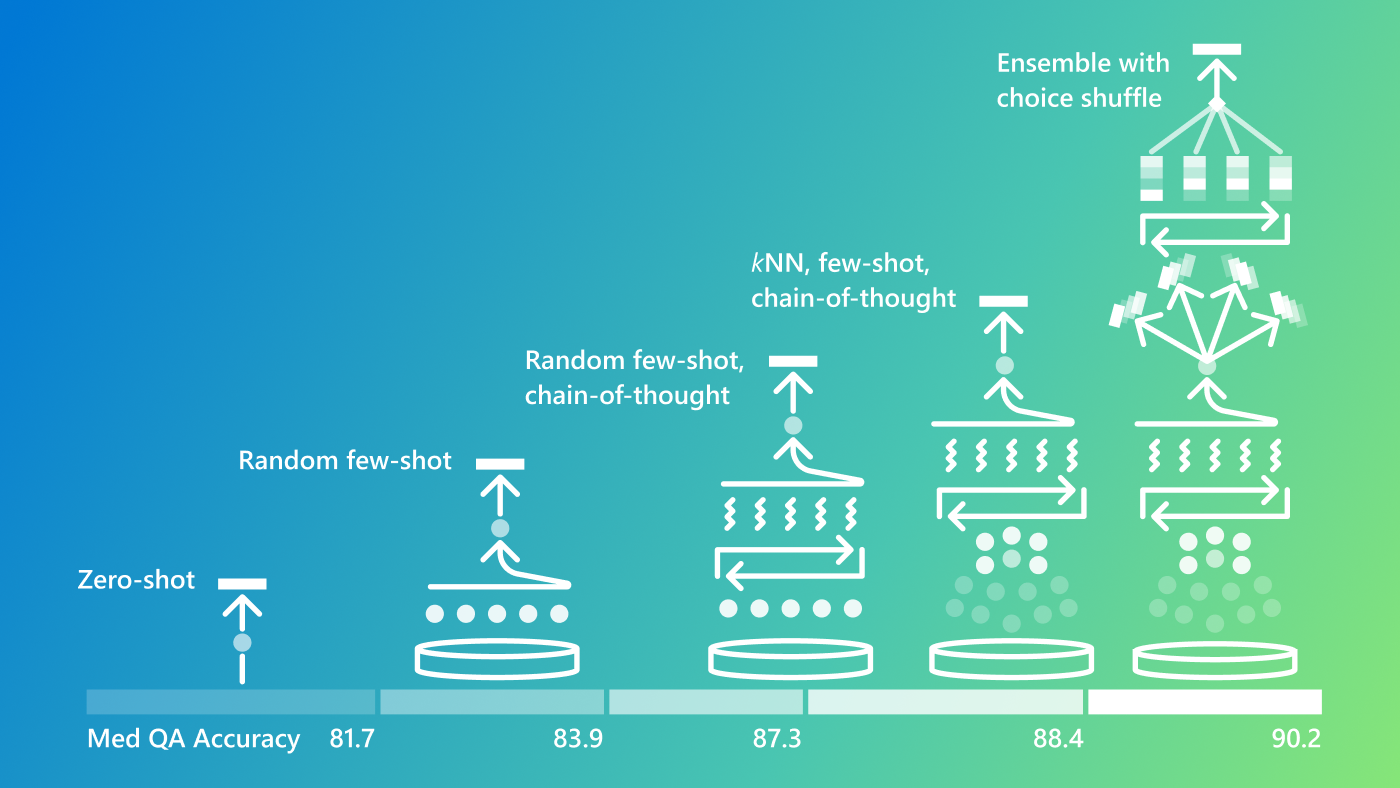

Advances in run-time strategies for next-generation foundation models

Discover the most effective run-time strategies for the OpenAI o1-preview model and how they improve accuracy on medical language tasks.

RAD-DINO model

RAD-DINO is a vision transformer model trained to encode chest X-rays using the self-supervised learning method DINOv2. RAD-DINO is described in detail in RAD-DINO: Exploring Scalable Medical Image Encoders Beyond Text Supervision (F.…

MAIRA-2 model

MAIRA-2 is a multimodal transformer designed for the generation of grounded or non-grounded radiology reports from chest X-rays. It is described in more detail in MAIRA-2: Grounded Radiology Report Generation (S. Bannur, K. Bouzid et al.,…

RadFact: An LLM-based Evaluation Metric for AI-generated Radiology Reporting

RadFact is a framework for the evaluation of model-generated radiology reports given a ground-truth report, with or without grounding. Leveraging the logical inference capabilities of large language models, RadFact is not a single number but a suite of…

RadPhi-3: Small Language Models for Radiology

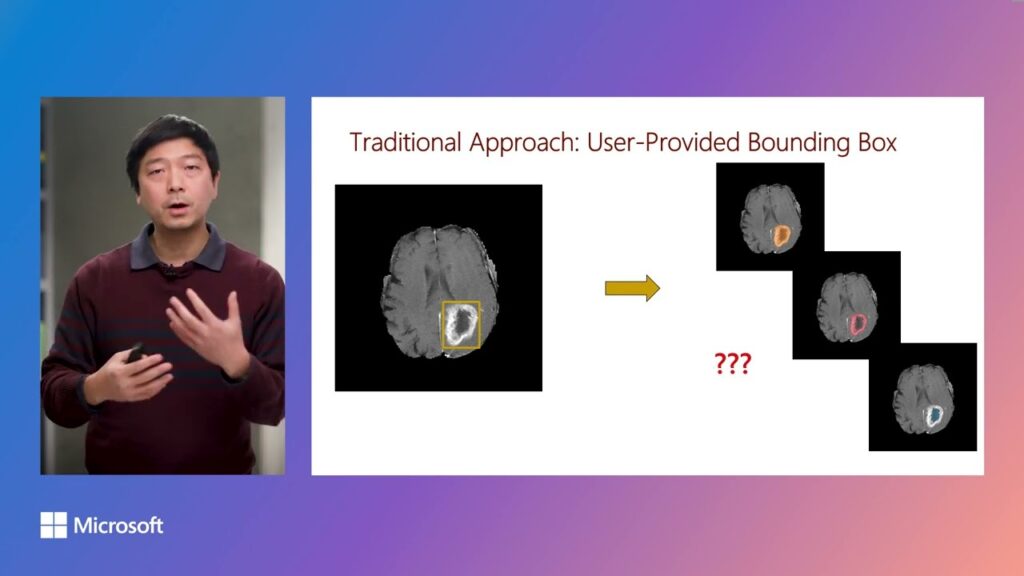

Introducing BiomedParse, a groundbreaking foundation model for biomedical image analysis

Image analysis is fundamental for clinical diagnostics and biomedical discovery. In this video, we introduce BiomedParse, a biomedical foundation model for holistic image analysis that can jointly conduct recognition, detection, and segmentation for 64 major…

BiomedParse: A foundation model for smarter, all-in-one biomedical image analysis

BiomedParse reimagines medical image analysis, integrating advanced AI to capture complex insights across imaging types. It is a step forward for diagnostics and precision medicine.