Publication

STEAM: Observability-Preserving Trace Sampling

Tool

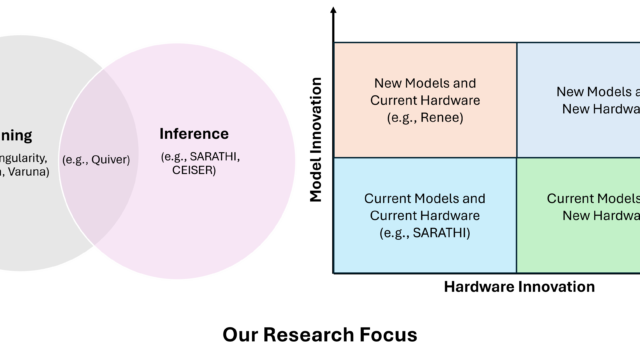

Sarathi-Serve

Sarathi-Serve (a research prototype) is a high-throughput, low-latency LLM serving framework. This repository contains a benchmark suite for evaluating LLM performance from a systems point of view. It contains various workloads and scheduling…

Microsoft Research Blog

Research Focus: Week of November 22, 2023

A new deep-learning compiler for dynamic sparsity; Tongue Tap could make tongue gestures viable for VR/AR headsets; Ranking LLM-Generated Loop Invariants for Program Verification; Assessing the limits of zero-shot foundation models in single-cell biology.